At least 12 U.S. states are moving on AI hiring legislation. The EU AI Act's obligations for high-risk systems apply from August 2, 2026. An audit of New York City's enforcement of its AI hiring law was just completed, and the results signal that tighter crackdowns are coming.

For HR leaders still treating AI hiring compliance as a future concern, the calendar has moved up. The patchwork of laws governing how AI can be used in recruiting decisions is no longer theoretical. Fines are being assessed. Lawsuits are being filed. And the federal vacuum that left employers navigating inconsistent state rules is not closing anytime soon: the U.S. Senate voted 99 to 1 to remove a proposed federal moratorium on state AI regulation, leaving compliance decisions firmly at the state and local level.

This article breaks down the five most consequential laws and regulations HR leaders need to understand in 2026 — what each requires, who is covered, and what the penalties look like.

Key Takeaways:

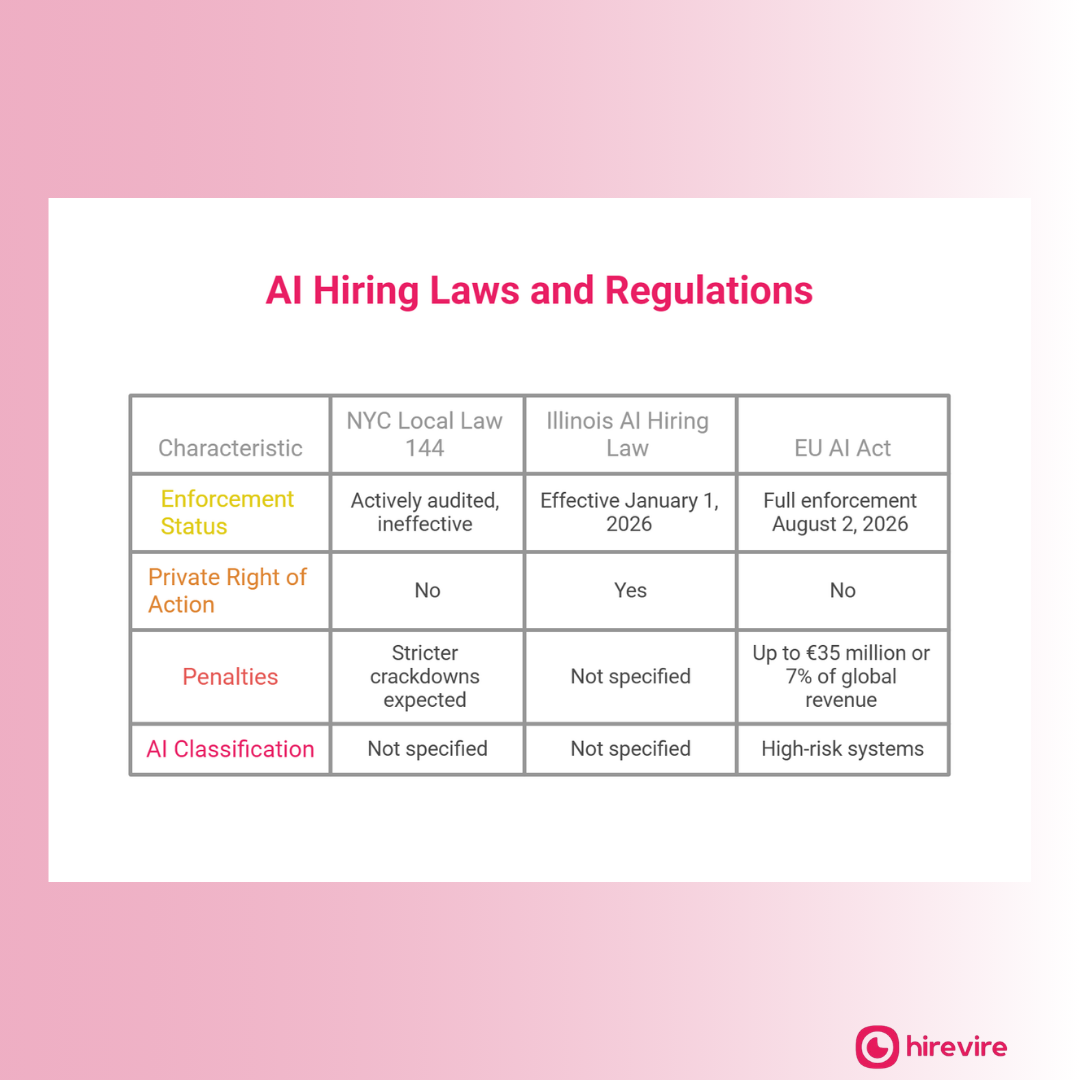

- Enforcement of NYC Local Law 144 was audited in December 2025 and described as "ineffective," so stricter enforcement is expected

- Illinois's AI hiring law (effective January 1, 2026) is the only U.S. state law giving candidates a private right of action against employers

- The EU AI Act classifies hiring tools as high-risk systems; fines for breaching high-risk obligations reach €15 million or 3% of global revenue, and the Act's top tier reaches €35 million or 7%

- Hirevire's async video screening model keeps humans in the decision seat, providing the auditable, transparent process regulators are requiring

Disclaimer: This article is for informational purposes only and does not constitute legal advice. HR leaders should consult qualified legal counsel for guidance specific to their jurisdiction and circumstances.

Why AI Hiring Laws Are Accelerating in 2026

The legislative push on AI hiring is not happening in a vacuum. Workday and Amazon both faced AI employment bias claims in 2025, cases that drew public and regulatory attention to how algorithmic decision-making in hiring can systematically disadvantage protected classes. Those cases created political pressure for legislative action that had previously stalled.

The result is a growing body of law that shares common themes even if the specific requirements differ: transparency in how AI is used, some form of bias auditing or assessment, and meaningful human involvement in consequential hiring decisions. Understanding the five most significant laws now means HR leaders can design processes that satisfy multiple regulatory frameworks rather than patching compliance one jurisdiction at a time.

Law 1: New York City Local Law 144 - The Audit Requirement Now Under Scrutiny

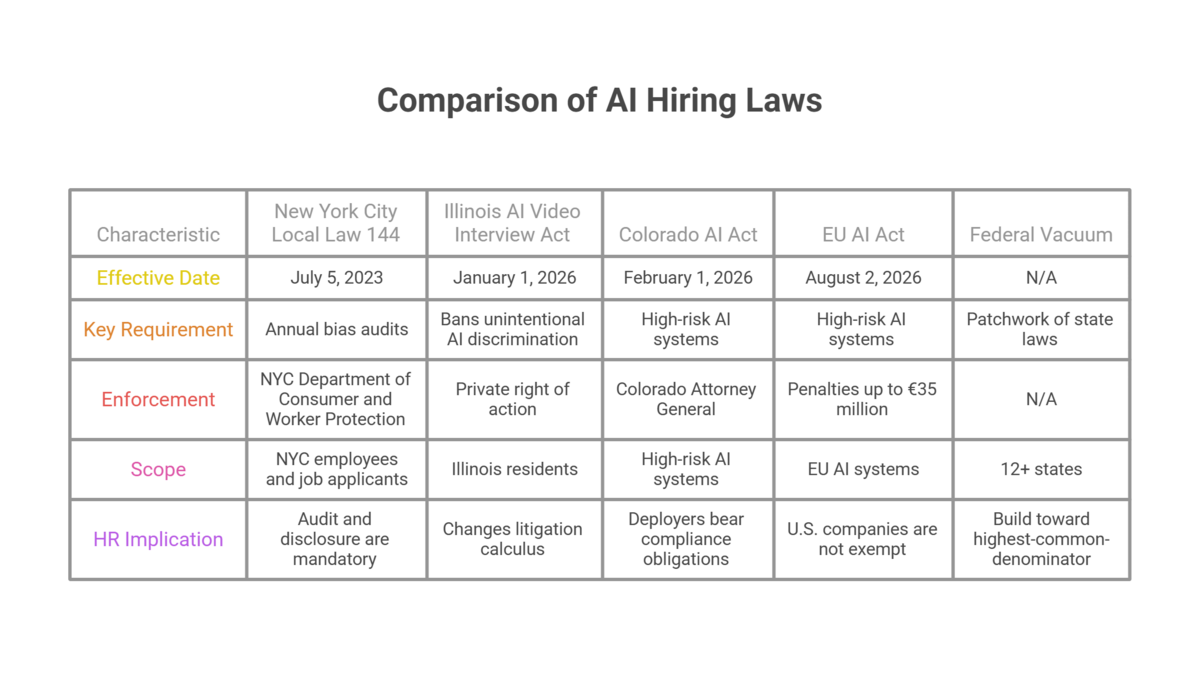

New York City's Local Law 144 of 2021, which has been enforced since July 5, 2023, requires employers using automated employment decision tools (AEDTs) in hiring or promotion decisions affecting NYC employees or job applicants to conduct annual bias audits performed by independent auditors. Employers must publish audit results and provide notice to candidates that an AEDT is being used.

The law is enforced by the NYC Department of Consumer and Worker Protection. Penalties run from $500 to $1,500 per day per violation.
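To see how quickly that range adds up, here is a minimal sketch of the arithmetic. It assumes a flat per-day, per-violation model using only the $500 and $1,500 figures quoted above; the function name `penalty_exposure` and the example inputs are hypothetical, and the actual penalty schedule may be applied differently in practice.

```python
# Penalty range quoted in this article, in US dollars per day per violation.
MIN_DAILY_PENALTY = 500
MAX_DAILY_PENALTY = 1_500


def penalty_exposure(violations: int, days: int) -> tuple[int, int]:
    """Return (low, high) exposure under a flat per-day, per-violation model.

    This is a simplification for illustration, not a statement of how the
    enforcing agency actually calculates penalties.
    """
    if violations < 0 or days < 0:
        raise ValueError("violations and days must be non-negative")
    units = violations * days
    return units * MIN_DAILY_PENALTY, units * MAX_DAILY_PENALTY


# Hypothetical scenario: 3 ongoing violations left uncorrected for 30 days.
low, high = penalty_exposure(violations=3, days=30)
print(f"Estimated exposure: ${low:,} to ${high:,}")
# Estimated exposure: $45,000 to $135,000
```

Even a small number of unresolved violations reaches six figures within a month under these assumptions.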

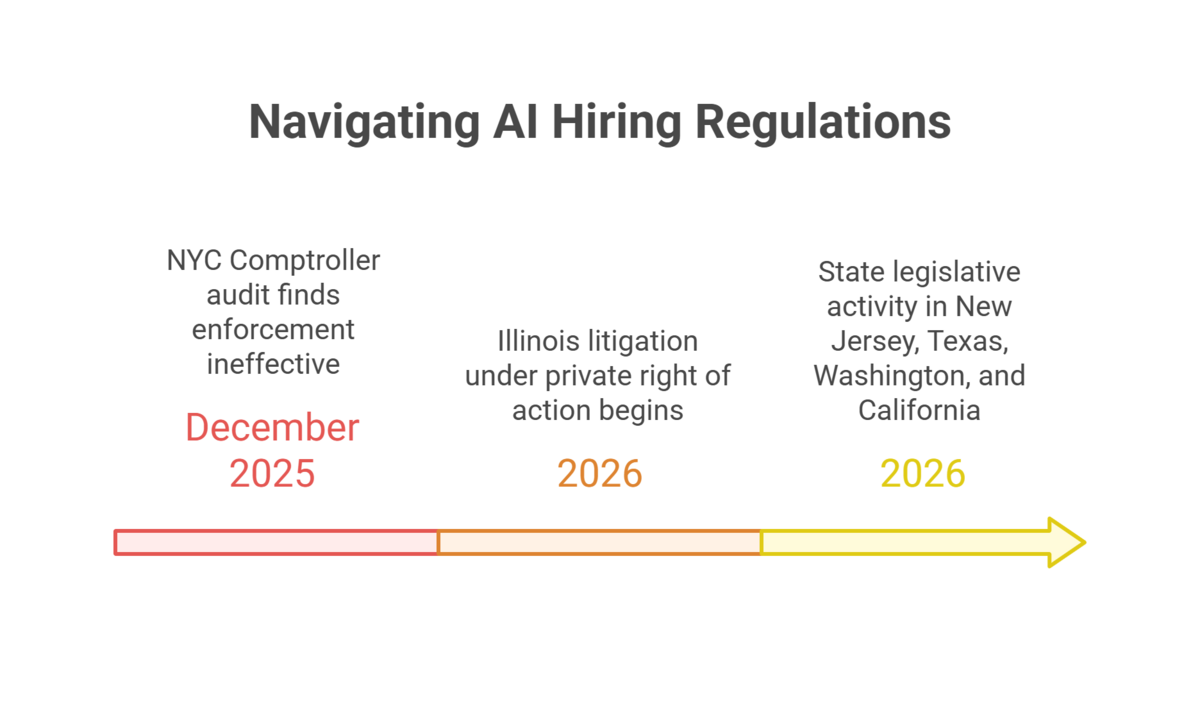

The enforcement picture became significantly more complicated in December 2025. The New York State Comptroller's Office released an audit finding that enforcement of Local Law 144 has been "ineffective," with limited active monitoring and few penalties actually assessed. A DLA Piper analysis published in January 2026 concluded that the audit signals increased enforcement risk for employers — specifically, that the findings are likely to prompt the city to increase scrutiny, not relax it.

For HR teams: if your company uses any AEDT to screen or evaluate NYC-based candidates or employees, the audit and disclosure requirements are not optional. The period of lax enforcement appears to be ending.

What qualifies as an AEDT under Local Law 144 is broad: any computational process that uses machine learning, statistical modeling, data analytics, or artificial intelligence to substantially assist or replace discretionary decision-making in assessing candidates for employment. Many standard ATS tools with AI-powered screening capabilities fall within this definition. Companies that have not audited their vendor stack for AEDT status should do so before the enforcement environment tightens further.
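For teams that want a feel for what a bias audit measures, the sketch below computes selection rates and impact ratios, a metric commonly used in such audits: each category's selection rate divided by the rate of the most-selected category. The category names and counts are invented for illustration, `impact_ratios` is a hypothetical helper, and a real audit must be performed by an independent auditor using the method the applicable rules require.

```python
def impact_ratios(selected: dict[str, int], applicants: dict[str, int]) -> dict[str, float]:
    """Compute each category's selection rate relative to the highest rate.

    selected[g] is the number of candidates in category g who advanced;
    applicants[g] is the number in category g who were assessed.
    """
    # Selection rate = advanced / assessed, skipping empty categories.
    rates = {g: selected[g] / applicants[g] for g in applicants if applicants[g] > 0}
    if not rates:
        raise ValueError("no applicants in any category")
    top_rate = max(rates.values())
    if top_rate == 0:
        raise ValueError("no candidates were selected in any category")
    return {g: rate / top_rate for g, rate in rates.items()}


# Made-up counts: 50 of 100 advanced in one category, 20 of 80 in another.
ratios = impact_ratios(
    selected={"group_a": 50, "group_b": 20},
    applicants={"group_a": 100, "group_b": 80},
)
for group, ratio in sorted(ratios.items()):
    print(f"{group}: impact ratio {ratio:.2f}")
```

A ratio well below 1.0 for a category is the kind of disparity an auditor would examine more closely.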

Law 2: Illinois Artificial Intelligence Video Interview Act and 2026 Amendment

Illinois has been ahead of the AI hiring compliance curve since 2020. The original Artificial Intelligence Video Interview Act (820 ILCS 42) required employers using AI to analyze video interviews to notify candidates, explain how the AI works, and obtain consent before recording. It also prohibited sharing video content with third parties without consent.

On January 1, 2026, a significant amendment took effect that HR leaders at every company hiring Illinois residents need to understand. The amendment bans AI discrimination in hiring even when that discrimination is unintentional. More consequentially, it adds a private right of action, meaning candidates who believe they were discriminated against through AI hiring tools can sue the employer directly in court, without needing to first file a complaint with a government agency.

Illinois is the only U.S. state with this provision. The practical implication is substantial: it changes the litigation calculus for every employer using AI in hiring decisions affecting Illinois workers. Companies can no longer rely on the absence of regulatory enforcement as protection.

Stinson LLP's analysis of state AI hiring laws identifies Illinois as setting the high-water mark for candidate rights in AI hiring — and notes that other states may follow its private right of action model.

Law 3: Colorado Artificial Intelligence Act - High-Risk AI in Hiring

Colorado's Artificial Intelligence Act, signed into law in May 2024, was originally set to take effect February 1, 2026; the legislature has since delayed its key provisions to June 30, 2026. It covers "high-risk AI systems," a category that explicitly includes AI used to make consequential decisions about employment, including hiring and termination.

Under the Colorado law, developers and deployers of high-risk AI systems are required to:

- Use reasonable care to protect consumers from known or reasonably foreseeable risks of algorithmic discrimination

- Conduct and document impact assessments

- Implement risk management policies and programs

- Provide notice when high-risk AI is used in a consequential decision

- Allow individuals to appeal or request human review of adverse AI-driven decisions

The law is enforced by the Colorado Attorney General. Violations can result in civil penalties. There is no private right of action (unlike Illinois), but the documentation requirements are substantial — employers deploying AI screening tools need to maintain records demonstrating compliance with the impact assessment and risk management requirements.

For companies using AI tools from third-party vendors in Colorado hiring: deployers (not just developers) bear compliance obligations. Vendor contracts should address who is responsible for conducting impact assessments and maintaining required documentation.

Law 4: EU AI Act - High-Risk Classification for All Hiring Tools

The EU AI Act, which entered into force August 1, 2024, classifies AI systems used for recruitment and selection of persons, including screening and evaluation of candidates, as high-risk AI systems under Annex III. Full compliance obligations for high-risk systems apply from August 2, 2026.

The requirements for high-risk AI in hiring under the EU AI Act include:

- Risk management systems and technical documentation

- High-quality training data requirements with measures to address bias

- Human oversight mechanisms built into the system

- Logging and record-keeping of system operation

- Registration in the EU database of high-risk AI systems (for systems used by public entities; requirements for private employers are tied to the general market provisions)

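Penalties under the EU AI Act are tiered. The headline ceiling of €35 million or 7% of annual worldwide turnover, whichever is higher, applies to prohibited AI practices. Non-compliance with the obligations applicable to high-risk AI systems carries fines of up to €15 million or 3% of annual worldwide turnover, whichever is higher.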
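The "whichever is higher" rule means the percentage, not the fixed amount, sets the ceiling for large companies. The sketch below illustrates this using the €35 million and 7% figures quoted above; the function name `max_fine_eur` and the turnover values are hypothetical, and the result is a statutory ceiling, not a prediction of any actual fine.

```python
def max_fine_eur(annual_turnover_eur: int, fixed_cap_eur: int, turnover_pct: int) -> int:
    """Return the higher of a fixed cap and a percentage of annual turnover.

    Integer arithmetic is used so whole-euro amounts stay exact.
    """
    pct_amount = annual_turnover_eur * turnover_pct // 100
    return max(fixed_cap_eur, pct_amount)


# Hypothetical company with EUR 1 billion turnover: 7% (EUR 70M) exceeds EUR 35M.
print(max_fine_eur(1_000_000_000, 35_000_000, 7))  # 70000000

# Hypothetical company with EUR 100 million turnover: 7% is EUR 7M, so the
# fixed EUR 35M figure is the higher of the two.
print(max_fine_eur(100_000_000, 35_000_000, 7))  # 35000000
```

The same function works for any other tier by passing that tier's fixed amount and percentage.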

U.S. companies are not exempt. The EU AI Act applies to any AI system deployed within the EU, regardless of where the provider is based. U.S. companies that recruit EU-based candidates, operate in EU markets, or use AI tools developed in the EU face compliance obligations. Greenberg Traurig's analysis specifically addresses the cross-jurisdictional implications for U.S. employers.

For most SMBs and mid-market companies, the immediate practical step is determining whether their AI hiring tool vendors are conducting the required risk assessments and documentation. Third-party tool providers that sell into EU markets should be treating compliance as a vendor obligation — but employers retain responsibility for ensuring the tools they use are compliant.

Law 5: The Federal Vacuum - 12+ State Laws, No Unified Standard

The U.S. federal government's approach to AI hiring regulation has been defined by absence. The U.S. Senate voted 99 to 1 to remove a proposed federal moratorium on state AI regulation, signaling that Congress is unlikely to override state rules or set a national AI standard in the near term.

The result is what legal experts describe as a growing patchwork: 12+ states have either passed AI hiring legislation or have active bills advancing through legislative chambers. Beyond Illinois and Colorado, notable state-level activity includes Maryland (which enacted the Maryland AI in Employment Decisions Act requiring notice to candidates), New Jersey (proposed legislation), and New York State (separate from NYC Local Law 144, with proposed state-level requirements).

Stinson LLP's comprehensive review of state AI hiring laws notes that the specific requirements vary significantly — some focus on bias auditing, others on transparency and notice, others on human review requirements. Companies with employees or applicants across multiple states face the compliance burden of satisfying overlapping and sometimes inconsistent standards.

The practical response for HR leaders is to build toward the highest-common-denominator standard — the requirements that, if met, satisfy the most demanding jurisdiction's rules. That typically means: documented human oversight of AI-assisted decisions, candidate notice when AI tools are used, some form of bias or impact assessment, and a process that allows candidates to request human review.
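One way to operationalize this is to treat each jurisdiction as a set of requirements and build to the union of the sets that apply to you. The sketch below does that with a simplified mapping drawn from the summaries in this article; the requirement labels and the `combined_requirements` helper are illustrative assumptions, not a complete or authoritative statement of any law.

```python
# Simplified requirement labels based on this article's summaries only.
REQUIREMENTS = {
    "NYC": {"bias_audit", "publish_audit_results", "candidate_notice"},
    "Illinois": {"candidate_notice", "candidate_consent", "anti_discrimination"},
    "Colorado": {
        "impact_assessment",
        "risk_management",
        "candidate_notice",
        "human_review_on_request",
    },
    "EU": {"risk_management", "technical_documentation", "human_oversight", "logging"},
}


def combined_requirements(jurisdictions: list[str]) -> set[str]:
    """Return the union of requirements across the given jurisdictions."""
    unknown = [j for j in jurisdictions if j not in REQUIREMENTS]
    if unknown:
        raise KeyError(f"no requirement data for: {unknown}")
    combined: set[str] = set()
    for jurisdiction in jurisdictions:
        combined |= REQUIREMENTS[jurisdiction]
    return combined


# Hypothetical employer hiring in New York City and Colorado.
for requirement in sorted(combined_requirements(["NYC", "Colorado"])):
    print(requirement)
```

Designing the process against the combined set once is usually simpler than maintaining a separate workflow per jurisdiction.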

The federal vacuum also creates strategic uncertainty. Companies that invest heavily in AI hiring tools optimized for one regulatory environment may find those tools non-compliant when new state laws pass or federal standards eventually emerge. Building toward human-supervised, transparent processes reduces regulatory risk across jurisdictions while maintaining compliance flexibility as the legal landscape continues to evolve.

How Hirevire Helps HR Leaders Navigate AI Hiring Compliance

As compliance requirements tighten around AI in hiring, the fundamental question regulators are asking is: who makes the decision? Laws like NYC Local Law 144, Illinois's AI Video Interview Act, Colorado's AI Act, and the EU AI Act all push in the same direction — toward transparent, auditable, human-supervised hiring decisions.

Hirevire is built on a model that satisfies this standard. Candidates record async video, audio, or text responses to structured questions. Recruiters and hiring managers watch those responses and make decisions. There is no black-box algorithm generating a pass/fail score. There are no automated rejections. Every screening decision is made by a human, with the candidate's response on record.

Auditable by design. Every Hirevire screening interaction is logged, shareable, and reviewable. If an HR team needs to demonstrate how a screening decision was made — to an auditor, a regulator, or a candidate who requests review — the evidence is there.

Transparent to candidates. Candidates know exactly what they're submitting and to whom. There's no algorithmic evaluation happening in the background. That transparency is specifically what regulators under NYC Local Law 144, Illinois law, and the EU AI Act are requiring employers to provide.

Human oversight built in. Because recruiters evaluate responses directly, Hirevire doesn't create the AI decision-making layer that triggers most compliance requirements. It's a structured screening tool, not an automated decision system — a distinction that matters significantly for companies managing compliance across multiple jurisdictions.

Try Hirevire free and see how your team can build a screening process that holds up to regulatory scrutiny.

Action Steps: Building a Compliance-Ready Hiring Process

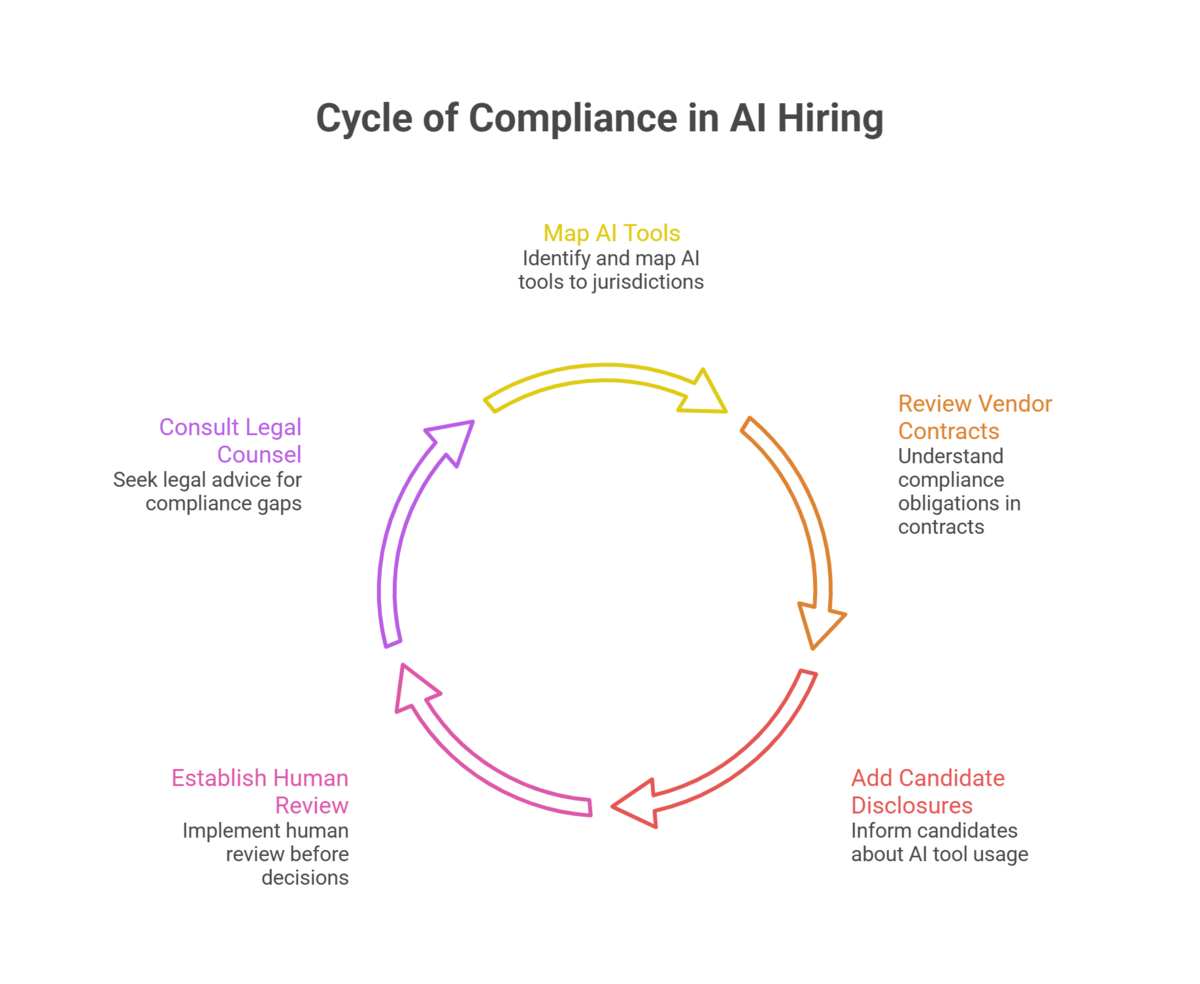

Step 1: Map Your AI Tools to Jurisdictions

Document every AI tool used in your hiring process — ATS systems, resume screening tools, video analysis software, assessment platforms — and map each to the jurisdictions where you hire. This inventory is the foundation of any compliance program and the starting point for determining which laws apply to your operations.
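The inventory can start as a very small data structure. The sketch below assumes each tool is recorded with a category, a flag for whether it uses AI, and the jurisdictions where it touches candidates; the tool names are placeholders and `tools_by_jurisdiction` is a hypothetical helper, not part of any vendor's product.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class HiringTool:
    name: str
    category: str                  # e.g. "ATS", "resume screening"
    uses_ai: bool                  # does the tool score, rank, or filter with AI?
    jurisdictions: tuple[str, ...]  # where candidates processed by the tool are located


def tools_by_jurisdiction(tools: list[HiringTool]) -> dict[str, list[str]]:
    """Group AI-enabled tools by the jurisdictions where they are used."""
    index: dict[str, list[str]] = defaultdict(list)
    for tool in tools:
        if not tool.uses_ai:
            continue  # tools without AI features are outside this inventory
        for jurisdiction in tool.jurisdictions:
            index[jurisdiction].append(tool.name)
    return dict(index)


# Placeholder inventory for illustration only.
inventory = [
    HiringTool("ExampleATS", "ATS", True, ("NYC", "Illinois")),
    HiringTool("ExampleResumeScreen", "resume screening", True, ("Colorado",)),
    HiringTool("ExampleScheduler", "scheduling", False, ("NYC",)),
]

for jurisdiction, names in sorted(tools_by_jurisdiction(inventory).items()):
    print(jurisdiction, names)
```

The output gives legal counsel a per-jurisdiction list of tools to assess against the laws that apply there.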

Step 2: Review Vendor Contracts for Compliance Obligations

Most AI hiring tools are provided by third-party vendors. Review contracts to understand who bears compliance obligations — for bias audits, impact assessments, and documentation. In many cases, deployer obligations (your company) and developer obligations (the vendor) overlap. Ensure contracts specify which party is responsible for what.

Step 3: Add Candidate Disclosure Notices

Several laws — including NYC Local Law 144 and Illinois's AI Video Interview Act — require notifying candidates when AI tools are used in hiring decisions. Implement standard disclosure language in job postings and application confirmation emails that describes what AI tools are used and how they inform decisions.

Step 4: Establish Human Review Checkpoints

Design your screening process so that human review occurs before any adverse decision reaches a candidate. Document these checkpoints. For companies using AI scoring or filtering, this means building in a step where a recruiter reviews AI recommendations before rejections are sent. This single process change addresses requirements under Colorado, Illinois, and the EU AI Act simultaneously.
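In a system that uses AI scoring, the checkpoint can be enforced in code as well as in policy. The sketch below shows one possible gate: a rejection cannot be sent until a named human reviewer has recorded the final outcome. The `ScreeningDecision` record and both functions are hypothetical and do not reflect any specific ATS or vendor API.

```python
from dataclasses import dataclass
from typing import Optional

VALID_OUTCOMES = {"advance", "reject"}


@dataclass
class ScreeningDecision:
    candidate_id: str
    ai_recommendation: str                 # what the AI tool suggested
    reviewer: Optional[str] = None         # who reviewed the recommendation
    final_outcome: Optional[str] = None    # the human's decision


def record_human_review(decision: ScreeningDecision, reviewer: str, outcome: str) -> None:
    """Record the human reviewer and their decision on the record."""
    if outcome not in VALID_OUTCOMES:
        raise ValueError(f"outcome must be one of {sorted(VALID_OUTCOMES)}")
    if not reviewer:
        raise ValueError("a reviewer must be identified")
    decision.reviewer = reviewer
    decision.final_outcome = outcome


def can_send_rejection(decision: ScreeningDecision) -> bool:
    """Allow a rejection only after a human has reviewed and confirmed it."""
    return decision.reviewer is not None and decision.final_outcome == "reject"


decision = ScreeningDecision(candidate_id="c-001", ai_recommendation="reject")
print(can_send_rejection(decision))  # False: no human review recorded yet

record_human_review(decision, reviewer="recruiter@example.com", outcome="reject")
print(can_send_rejection(decision))  # True: a human confirmed the outcome
```

Because the reviewer and outcome are stored on the record, the same structure doubles as documentation of the checkpoint.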

Step 5: Consult Legal Counsel Now, Not After a Complaint

The compliance window for several of these laws has already opened. Companies that have not yet assessed their AI hiring practices against the applicable legal requirements in their operating jurisdictions should prioritize that assessment. The cost of proactive compliance is significantly lower than the cost of responding to a complaint, audit finding, or lawsuit.

Many HR teams discover during this assessment that their ATS vendors have not proactively communicated their compliance obligations under these laws. Vendors may provide tools that technically fall within regulatory scope without flagging the deployment-side requirements that fall on the employer. Legal counsel with AI employment law expertise can identify these gaps before they become enforcement issues.

Frequently Asked Questions

Which AI hiring law has the highest penalties?

The EU AI Act carries the largest potential penalties: up to €15 million or 3% of annual global revenue for non-compliance with obligations applicable to high-risk AI systems, rising to €35 million or 7% for prohibited AI practices. In the U.S., NYC Local Law 144 currently provides for $500-$1,500 per day per violation, which adds up quickly across multiple candidates.

Does my company need to comply with NYC Local Law 144 if we're not based in New York City?

Yes, if your company uses automated employment decision tools to screen or evaluate candidates who are located in New York City, or current NYC employees being considered for promotion. The law applies based on where the candidate or employee is located, not where the employer is headquartered.

What is a private right of action, and why does Illinois's law matter?

A private right of action means individuals can file lawsuits directly against employers without first going through a government agency. Illinois's 2026 amendment to its AI Video Interview Act gives candidates this right if they believe AI discrimination occurred in a hiring decision. Most other U.S. AI hiring laws rely on government enforcement. Illinois is the exception, and it significantly raises the litigation exposure for companies using AI hiring tools affecting Illinois workers.

What does "human oversight" mean under AI hiring law requirements?

Different laws define it differently, but the common thread is that a human being with appropriate authority must be able to review, override, or be accountable for decisions made with AI assistance. Under the Colorado AI Act, deployers must provide affected individuals the ability to appeal or request human review. Under the EU AI Act, human oversight mechanisms must be built into the system's design. A process where humans review AI recommendations before decisions are communicated to candidates generally satisfies this requirement.

Is async video interviewing covered by AI hiring laws?

It depends on how the tool functions. If the platform uses AI to analyze video content and generate a score, rating, or recommendation (as HireVue and similar tools do), it is likely covered by laws targeting AEDTs or AI-assisted decisions. If the platform simply records and displays candidate responses for human review (as Hirevire does), it does not generate AI decisions and is not typically subject to the same requirements. The distinction matters practically.

What should HR leaders do if they operate across multiple states with different AI laws?

Build to the most demanding standard. Identify the requirements that are strictest across your operating jurisdictions — typically Illinois's consent and anti-discrimination provisions, Colorado's impact assessment requirements, and NYC's bias audit requirements — and design your process to satisfy all of them. Companies that build to the highest common denominator once avoid retrofitting compliance as new laws pass.

Will federal AI hiring regulation pass?

The 99-1 Senate vote against the proposed federal moratorium suggests federal preemption of state laws is not imminent. Most legal analysts expect the state-by-state patchwork to continue growing rather than consolidate. Companies should plan for ongoing state-level legislative activity rather than waiting for a federal standard to simplify compliance.

What to Watch Next

Illinois litigation under the private right of action. The first cases filed under Illinois's 2026 amendment will clarify what "AI discrimination" means in practice and what the damages exposure looks like. Early cases will significantly inform how companies using AI tools in Illinois hiring assess their risk.

NYC enforcement intensification following the Comptroller audit. The December 2025 audit finding that enforcement was "ineffective" creates political pressure to demonstrate results. Companies that have not completed required bias audits or published required disclosures should treat this as urgent — not as a future concern.

State legislative activity in 2026. New Jersey, Texas, Washington, and California all have active AI hiring legislation in various stages. The specific requirements being proposed vary, but the general direction — toward transparency, human oversight, and bias accountability — is consistent. HR leaders monitoring new developments should track whether state bills include private rights of action (the provision with the highest litigation risk).

The regulatory environment will continue tightening. Companies that build transparent, human-supervised hiring processes now will adapt to new requirements more easily than those that continue deploying opaque algorithmic systems.

Conclusion

The era of unregulated AI in hiring is ending. NYC's enforcement has been audited. Illinois candidates can now sue. Colorado requires documented impact assessments. The EU AI Act is in force. The federal response is a vacuum that state legislatures are filling one law at a time.

HR leaders who treat AI hiring compliance as a legal checkbox are missing the strategic point. The laws are converging on a common standard: transparency, human oversight, and accountability for AI-assisted decisions. Companies that build processes meeting that standard aren't just avoiding fines. They're also building trust with candidates at a moment when many are wary of automated screening.

Hirevire's human-signal screening model is designed for exactly this environment: async video responses evaluated by recruiters, no algorithmic scoring, full auditability. That's not just good practice — it's where regulation is heading.