Summary:

Choosing the right pre-hire assessment is crucial for effective hiring. This guide outlines four main types: skills-based, cognitive, personality, and situational judgment tests, each suited to different roles. It emphasizes the importance of matching assessment type to role requirements and suggests using video pre-screening to filter candidates before incurring assessment costs. This approach ensures that assessments are used efficiently and effectively, improving hiring outcomes.

You've narrowed your candidate pool to 20 people. Now you need to figure out who can actually do the job. A colleague recommends a cognitive test. Your ATS vendor is pushing a personality assessment. You've seen a case study about situational judgment tests. And your budget is $50 per candidate.

The problem isn't a shortage of assessment options - it's that most guides on this topic tell you what each assessment does without helping you decide which one fits your specific situation. This guide works differently.

According to SHRM research, organizations that use structured pre-hire assessments reduce mis-hires by up to 36% compared to interviews alone. But that figure assumes the right assessment is being used for the right role. An ill-matched assessment wastes candidate time, inflates costs, and produces data that doesn't predict job performance.

This is a decision guide. It covers the four main pre-hire assessment types, when each is appropriate, the fairness and legal landscape for each, and a role-based decision framework to help you choose. It also makes the case for why video pre-screening should come before any formal assessment - and why skipping that step costs far more than it saves.

Quick Summary: The four main pre-hire assessment types (skills-based, cognitive, personality, and situational judgment tests) each serve different purposes and fit different roles. Hirevire provides a Step 0 before any of these - a low-cost async video pre-screen that filters mismatched candidates before the $50-per-candidate assessment spend begins.

Table of Contents

- The 4 Types of Pre-Hire Assessments

- Skills-Based Assessments - When and Why

- Cognitive Ability Tests - Pros, Cons, Fairness

- Personality Assessments for Culture Fit

- Situational Judgment Tests for Role-Specific Screening

- Assessment Selection Framework by Role Type

- Why Video Pre-Screening Should Come Before Any Assessment

- FAQ

The 4 Types of Pre-Hire Assessments

Pre-hire assessments are structured tools used to evaluate candidates before a hiring decision. They differ from interviews in that they're standardized - every candidate in a given hiring round takes the same assessment under the same conditions, producing comparable outputs.

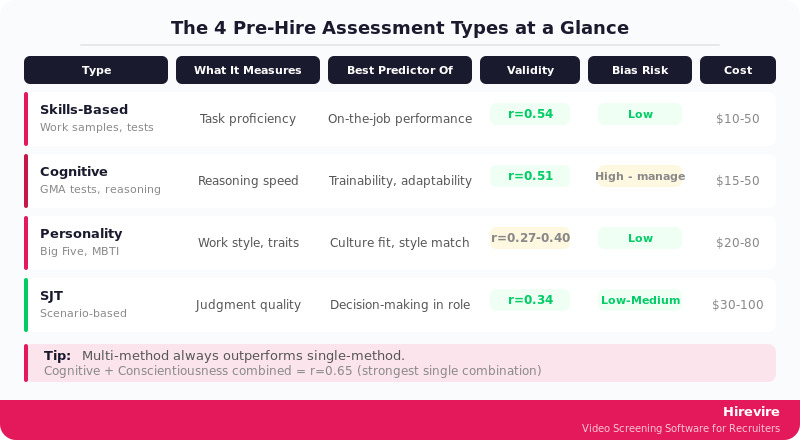

The four main types split cleanly by what they measure:

| Assessment Type | What It Measures | Best Predictor Of | Typical Cost |
|---|---|---|---|
| Skills-based | Job-specific technical ability | On-the-job performance for defined tasks | $10-$50/candidate |
| Cognitive ability | General reasoning and problem-solving speed | Trainability and adaptability across roles | $15-$50/candidate |
| Personality | Behavioral traits and working style preferences | Culture fit, role-specific success patterns | $20-$80/candidate |
| Situational judgment | Judgment under realistic work scenarios | Decision quality in context | $30-$100/candidate |

Each has strengths, limitations, and appropriate use cases. None is universally best. The right choice depends on the role, the volume of candidates, the stage of the funnel at which the assessment is deployed, and how the data will be used in the hiring decision.

Why Assessment Type Matters More Than Tool Choice

A common mistake is selecting an assessment vendor before deciding what to measure. This leads to using whatever comes bundled with your ATS or recommended by a sales team, regardless of fit.

The sequence should be:

- Identify what predicts success in this specific role

- Choose the assessment type that measures that predictor

- Then select a tool within that type

A cognitive ability test measures something real - but if the role doesn't require fast learning of novel concepts, that measurement won't predict performance. A personality assessment captures genuine tendencies - but if the role has narrow behavioral requirements (e.g., data entry accuracy), personality is a weak predictor.

Skills-Based Assessments - When and Why

A skills-based assessment measures a candidate's current proficiency at a specific task or set of tasks directly relevant to the role. The assessment either simulates job work (work samples) or tests knowledge of tools and processes used in the job.

What Skills Assessments Cover

Skills assessments range from narrow single-skill tests to broader multi-domain evaluations:

- Technical coding tests: Candidates solve programming problems in a timed environment. Tools like HackerRank and Codility are common for software engineering roles.

- Writing samples: Candidates produce a piece of written work (email, proposal, report summary) and are evaluated against a rubric. Used for content, sales, and communications roles.

- Tool proficiency tests: Candidates complete tasks in real software - Excel modeling, Salesforce workflow setup, Adobe Illustrator tasks.

- Data analysis exercises: Candidates receive a dataset and answer business questions. Common for analyst and operations roles.

- Work sample tests: Candidates complete an abbreviated version of actual job work - a customer support ticket response, a brief financial model, a product design mockup.

When Skills Assessments Are the Right Choice

Skills assessments are highest-value when:

- The role has clearly defined technical requirements with a right/wrong dimension

- Poor performance on the skill is a genuine disqualifier (not just a concern)

- The assessment task is representative of real job work (not a proxy)

- Candidate pool is too large to determine skill depth from resumes alone

They're weaker when:

- The skill is trainable in under 4 weeks (then coachability matters more than current level)

- The "skill" being measured is actually judgment, which doesn't map to right/wrong test formats

- The assessment is so long it introduces significant candidate drop-off

Fairness Profile of Skills Assessments

Skills assessments tend to have better fairness profiles than cognitive tests when properly designed. Because they measure task performance directly rather than proxying through abstract reasoning, there's less opportunity for background or education level to produce systematic score differences unrelated to job capability.

The critical caveat: work samples that favor candidates from certain academic programs or companies can still introduce bias. Blind scoring (reviewing outputs without candidate names or backgrounds visible) mitigates this.

Validity note: Well-designed work sample tests have among the highest predictive validity of any hiring tool (r=0.54 in meta-analyses), outperforming unstructured interviews (r=0.38) and cognitive tests alone (r=0.51). The challenge is design quality - a poorly constructed skills test produces neither valid nor fair results.

Practical Limits

Skills assessments require design investment. Off-the-shelf tests exist for common technical skills (coding, Excel, typing speed), but role-specific work samples must be built internally. That takes time. For high-volume, fast-moving hiring, the design overhead can be a bottleneck.

The best pre-employment assessment tools article covers skills-based platforms in detail.

Cognitive Ability Tests - Pros, Cons, Fairness

Cognitive ability tests measure general mental ability (GMA) - the capacity to process information, reason through novel problems, and learn quickly. They're among the most researched hiring tools in industrial-organizational psychology.

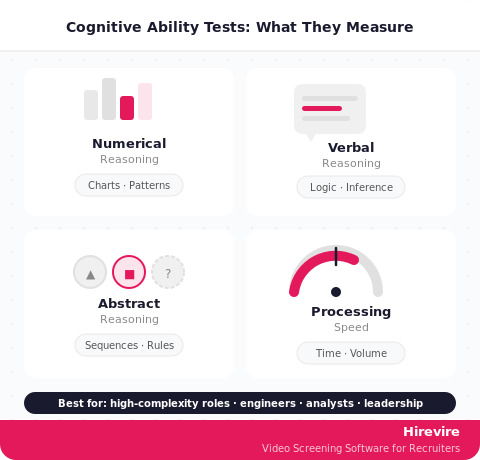

What Cognitive Tests Actually Measure

Cognitive tests don't measure what someone knows. They measure how quickly and accurately someone can reason. A candidate might answer questions involving:

- Numerical reasoning (interpreting charts, calculating percentages, identifying patterns in data)

- Verbal reasoning (reading passages, drawing logical inferences, identifying argument flaws)

- Abstract reasoning (completing shape sequences, identifying rules in pattern sets)

- Processing speed (volume of correct responses within a time limit)

The output is a score that correlates with general learning ability - how fast someone will acquire job knowledge, adapt to changing requirements, and perform on novel tasks.

When Cognitive Tests Justify Their Cost

Cognitive ability has the highest predictive validity of any single hiring tool for complex, knowledge-intensive roles (r=0.51 to 0.65 in research by Frank Schmidt and colleagues). It predicts who will learn fastest in jobs with high cognitive demand.

Appropriate uses:

- High-complexity roles: Software engineers, management consultants, financial analysts, researchers - roles where novel problem-solving is daily work

- Management and leadership pipelines: Cognitive ability predicts leadership effectiveness in organizations where strategic thinking is valued

- High-volume professional roles: When 200+ candidates are being evaluated for a competitive program and cognitive demand is genuinely high

Questionable uses:

- Routine operational roles: A warehouse supervisor role with well-defined procedures doesn't require the same cognitive load as a strategy analyst position. Using GMA tests here over-screens and reduces diversity without improving hire quality.

- Creative roles: Cognitive tests poorly predict creative performance - originality and lateral thinking don't correlate well with numerical reasoning speed.

The Fairness Problem

Cognitive ability tests show the largest mean score differences between demographic groups of any pre-hire assessment type, with research consistently finding meaningful score gaps by race and socioeconomic background.

This doesn't mean cognitive tests are inherently biased in intent - but it does mean their use in hiring has disparate impact implications that must be carefully managed:

- Organizations using cognitive tests should conduct adverse impact analyses periodically

- Tests should be validated against actual job performance data, not just assumed to predict it

- Combining cognitive tests with other assessment types reduces both adverse impact and prediction error

- Using cognitive tests as a hard cutoff (above X score = advance; below X = reject) amplifies disparate impact significantly more than using them as one factor in a profile
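
One common way to run the adverse impact analysis mentioned in the list above is the four-fifths rule of thumb from the Uniform Guidelines on Employee Selection Procedures: compare each group's pass rate with the highest group's pass rate and flag any ratio below 0.8. The sketch below is a minimal illustration, not a legal determination. The function names, group labels, and counts are hypothetical.

```python
from typing import Dict, List, Tuple

FOUR_FIFTHS_THRESHOLD = 0.8  # rule-of-thumb cutoff, used here as a screening heuristic


def selection_rates(counts: Dict[str, Tuple[int, int]]) -> Dict[str, float]:
    """Return passed / tested per group. `counts` maps group -> (passed, tested)."""
    return {
        group: passed / tested
        for group, (passed, tested) in counts.items()
        if tested > 0  # groups with no test takers are skipped
    }


def impact_ratios(counts: Dict[str, Tuple[int, int]]) -> Dict[str, float]:
    """Divide each group's pass rate by the highest group's pass rate."""
    rates = selection_rates(counts)
    top_rate = max(rates.values(), default=0.0)
    if top_rate == 0:
        # Nobody passed in any group, so there is no rate to compare against.
        return {group: 1.0 for group in rates}
    return {group: rate / top_rate for group, rate in rates.items()}


def flagged_groups(counts: Dict[str, Tuple[int, int]]) -> List[str]:
    """List groups whose impact ratio falls below the four-fifths threshold."""
    ratios = impact_ratios(counts)
    return sorted(g for g, ratio in ratios.items() if ratio < FOUR_FIFTHS_THRESHOLD)


# Hypothetical counts for illustration only: (passed, tested)
example_counts = {"group_a": (48, 80), "group_b": (24, 60)}
print(flagged_groups(example_counts))
```

A flagged group is a prompt for investigation and validation work, not proof of bias on its own. Small samples can swing these ratios considerably.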

The honest assessment: cognitive tests are among the most powerful predictors of job performance for the right roles, and among the most legally and ethically complex tools to use at scale.

Personality Assessments for Culture Fit

Personality assessments measure stable behavioral tendencies - how a person typically approaches work, interacts with others, handles pressure, and makes decisions. The most commonly used framework in hiring is the Big Five (Openness, Conscientiousness, Extraversion, Agreeableness, Emotional Stability).

How Personality Assessments Are Used in Hiring

Personality data in hiring is typically used in one of three ways:

1. Profile matching: The organization defines what personality profile correlates with success in the role (often from top performer analysis), then assesses candidates against that profile.

2. Culture fit screening: Assessments identify candidates whose working style and values align with the team or organization's operating norms.

3. Interview guide generation: Personality data surfaces areas worth exploring in structured interviews rather than being used as a direct filter.

The third use is the most defensible. Using personality as a hard filter (excluding all candidates who score below X on conscientiousness, for example) is legally risky and validity-limited.

Which Personality Traits Actually Predict Job Performance

Not all Big Five dimensions predict job performance equally:

| Trait | Predictive for... | Validity |
|---|---|---|
| Conscientiousness | Almost all roles | Moderate-high (r=0.31) |
| Emotional Stability | High-stress, customer-facing roles | Moderate (r=0.22) |
| Extraversion | Sales, leadership, customer success | Role-dependent |
| Openness | Creative, research, strategy roles | Role-dependent |
| Agreeableness | Team-heavy, collaborative roles | Low overall, context-dependent |

Conscientiousness - the tendency toward diligence, reliability, and goal-directed behavior - is the only Big Five trait that consistently predicts performance across role types. The others matter in specific contexts.

Limitations and Misuse

Personality assessments are widely misused in hiring:

- Faking is common. Candidates who understand what they're being assessed on can shift their responses toward the desired profile. Unlike cognitive tests, personality assessments have no right or wrong answers, making score manipulation easier.

- Low overall validity for complex roles. Personality alone explains relatively little variance in job performance (r=0.27-0.40 depending on trait and role). Combined with other assessment types, it adds incremental value.

- Cultural context matters. Personality trait expression varies across cultures. Extroversion means different things in different cultural contexts, which can disadvantage candidates from backgrounds where assertive self-presentation isn't the norm.

- Legal risk in pass/fail use. Using personality scores to eliminate candidates requires validation evidence. Several organizations have faced EEOC scrutiny over personality-based screening that had disparate impact without job-relatedness evidence.
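
To see why a correlation in the range quoted above counts as "relatively little variance", square it. Under a simple linear reading, r squared is the share of variance in job performance associated with the predictor. Using the two ends of that range as an illustration:

$$
r^2 = 0.27^2 \approx 0.07, \qquad r^2 = 0.40^2 = 0.16
$$

That is roughly 7% to 16% of the variance, which leaves most of the differences in performance unexplained by personality scores alone.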

Personality assessments work best as one data point in a multi-method assessment approach - particularly valuable when combined with structured interviews that probe the dimensions the personality data flags as relevant.

For a broader view of assessment tools by role, see the best talent assessment tools for small firms resource.

Situational Judgment Tests for Role-Specific Screening

A situational judgment test (SJT) presents candidates with realistic work scenarios and asks them to choose or rank responses based on what they'd actually do. Unlike personality assessments, SJTs evaluate judgment quality in context - not just what a person tends to do, but what they'd do when facing the specific challenges of the role.

How SJTs Work

A typical SJT scenario might look like:

"A client calls your manager to complain that your project team has missed a key deadline. Your manager asks you to prepare a response. You know the delay happened because a colleague submitted late work and didn't flag the problem. What would be your first step?"

Response options might include:

- A) Address the client professionally while avoiding attribution of blame

- B) Provide a full account of what happened, including your colleague's late submission

- C) Ask your manager for guidance before responding

- D) Propose a revised timeline and commit to preventing future delays

Each option reflects a different judgment priority. The "correct" answer depends on what the organization actually values and what the role requires.

When SJTs Are the Best Tool

SJTs are highest-value for:

- Customer-facing roles: How someone handles complaints, difficult customers, or ambiguous situations is hard to measure any other way before hiring

- Management and team lead roles: Handling team conflict, performance conversations, and competing priorities can be simulated effectively through SJT scenarios

- Compliance-heavy roles: Healthcare, legal, financial services - roles where judgment failures have real consequences benefit from scenario-based screening

- Roles where values alignment matters: When the key differentiator between good and excellent performance is how someone navigates gray areas, SJTs capture that in a way personality scores don't

SJT Fairness Profile

SJTs generally show smaller mean group differences than cognitive tests. Because they're framed around realistic scenarios rather than abstract reasoning, they're less sensitive to variations in test-taking familiarity. They're not bias-free - scenarios written from a single cultural context can disadvantage candidates from other backgrounds - but they have a more favorable fairness profile than GMA tests.

Validity note: SJTs have moderate predictive validity (r=0.34) for job performance, with higher validity when scenarios are developed from actual critical incidents in the role. Generic SJTs purchased off the shelf are less predictive than role-specific ones.

The Design Challenge

Like work sample tests, high-quality SJTs require upfront design investment. The scenarios need to come from real role challenges (often through interviews with current job holders or critical incident analysis), and scoring keys need to be validated against what actually differentiates top from average performers in the organization.

Off-the-shelf SJTs exist for common role types (customer service, healthcare, management), but their validity for a specific organizational context is never guaranteed without local validation.

Assessment Selection Framework by Role Type

With four assessment types in hand, the practical question is: which one (or combination) should you use for a given role? This framework maps role characteristics to assessment choices.

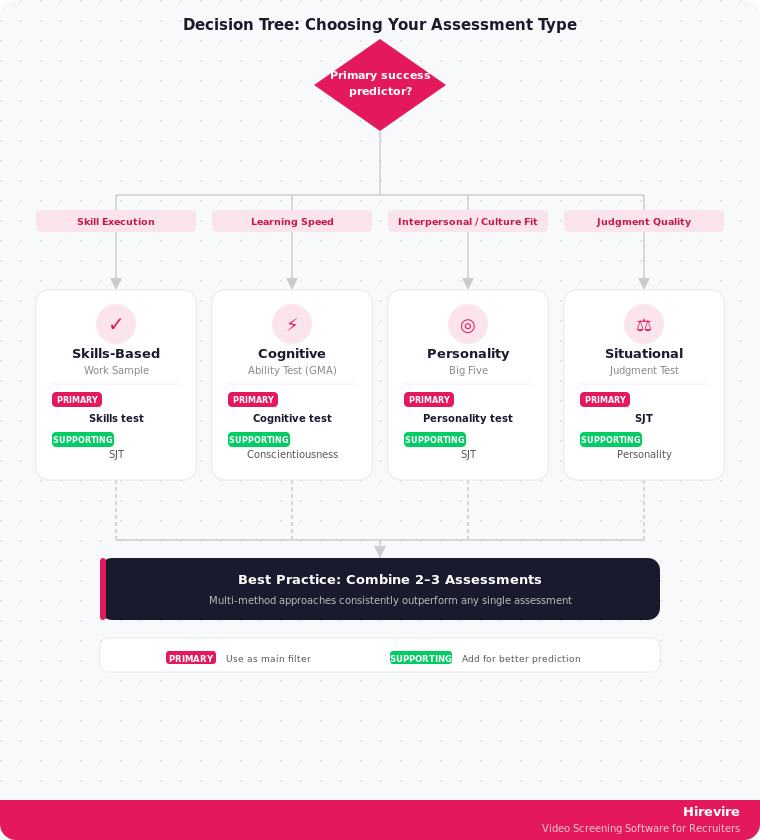

Decision Tree: Choosing Your Assessment Type

Start here: What is the primary predictor of success in this role?

If: Specific technical skill execution (coding, financial modeling, writing, data analysis)

- Primary: Skills-based assessment / work sample

- Supporting: SJT if judgment component exists

- Skip: Personality as primary filter; cognitive if skill is the clear differentiator

If: Learning speed and adaptability in complex knowledge work

- Primary: Cognitive ability test

- Supporting: Personality (Conscientiousness specifically)

- Note: Adverse impact analysis required; combine with other measures

If: Interpersonal effectiveness, culture alignment, working style

- Primary: Personality assessment (Big Five)

- Supporting: SJT for role-specific scenarios

- Skip: Cognitive as primary; skills test unless a role-specific technical requirement exists

If: Judgment quality under realistic role pressures

- Primary: Situational judgment test

- Supporting: Personality (Conscientiousness, Emotional Stability)

- Skip: Cognitive unless role has high novel problem-solving demand
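
The decision tree above can be written down as a small lookup table, which is handy if you want to keep the framework alongside your job requisition templates. This is a minimal sketch: the predictor keys, the `Recommendation` class, and the `recommend` function are hypothetical names chosen for illustration, and the values simply restate the four branches.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Recommendation:
    primary: str
    supporting: str
    caution: str  # the "Skip" or "Note" line from the decision tree


# Keys are hypothetical labels for the four primary success predictors.
DECISION_TREE: Dict[str, Recommendation] = {
    "technical_skill": Recommendation(
        primary="skills-based assessment / work sample",
        supporting="SJT if a judgment component exists",
        caution="skip personality as primary filter",
    ),
    "learning_speed": Recommendation(
        primary="cognitive ability test",
        supporting="personality (Conscientiousness)",
        caution="adverse impact analysis required; combine with other measures",
    ),
    "working_style": Recommendation(
        primary="personality assessment (Big Five)",
        supporting="SJT for role-specific scenarios",
        caution="skip cognitive as primary",
    ),
    "judgment": Recommendation(
        primary="situational judgment test",
        supporting="personality (Conscientiousness, Emotional Stability)",
        caution="skip cognitive unless novel problem-solving demand is high",
    ),
}


def recommend(predictor: str) -> Recommendation:
    """Look up the assessment recommendation for a primary success predictor."""
    try:
        return DECISION_TREE[predictor]
    except KeyError:
        valid = ", ".join(sorted(DECISION_TREE))
        raise ValueError(f"Unknown predictor {predictor!r}; expected one of: {valid}") from None


print(recommend("judgment").primary)
```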

Role-Type Mapping Table

| Role Category | Primary Assessment | Supporting | Skip |
|---|---|---|---|
| Software engineer | Skills (coding test) | Cognitive | Personality as filter |
| Customer success manager | SJT | Personality | Cognitive |
| Sales representative | Personality | SJT | Cognitive |
| Data analyst | Skills (analysis) | Cognitive | Personality as filter |
| Operations manager | SJT | Cognitive | Standalone personality |
| Content/copy writer | Skills (writing sample) | Personality (openness) | Cognitive |
| Finance/accounting | Skills (modeling) | Cognitive | Standalone SJT |
| HR generalist | Personality | SJT | Coding/technical |
| Executive/leadership | Cognitive | Personality + SJT | Single-method only |
| Customer support (high-volume) | SJT | Personality | Cognitive |

When to Use Multiple Assessments

For roles where the cost of a mis-hire is high and the candidate pool is sufficiently large, combining two assessment types predictably outperforms either alone. Research on assessment combinations consistently shows:

- Cognitive + Conscientiousness: validity r=0.65 (stronger than either alone)

- Skills test + SJT: strong for roles with both technical and judgment requirements

- Cognitive + Work sample: strong for professional roles with clear technical outputs

The practical constraint is cost and candidate experience. A $200/candidate assessment battery that 40% of candidates drop out of is worse than a $30 assessment with 85% completion. Completion rate matters.
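
To make the completion-rate point concrete, the sketch below compares the two batteries from the paragraph above by cost per completed assessment. It assumes the fee is charged for every candidate invited whether or not they finish, which is an assumption for illustration since vendor pricing models vary. The function name and the pool of 100 invited candidates are hypothetical.

```python
from typing import Tuple


def completed_and_unit_cost(
    fee_per_candidate: float, completion_rate: float, invited: int = 100
) -> Tuple[float, float]:
    """Return (completed assessments, cost per completed assessment).

    Assumes the fee applies to every invited candidate, finished or not.
    """
    if not 0 < completion_rate <= 1:
        raise ValueError("completion_rate must be in (0, 1]")
    completed = invited * completion_rate
    total_spend = invited * fee_per_candidate
    return completed, total_spend / completed


# $200 battery with 40% drop-off (60% completion) vs. $30 assessment with 85% completion
for label, fee, rate in [("battery", 200, 0.60), ("single test", 30, 0.85)]:
    completed, unit_cost = completed_and_unit_cost(fee, rate)
    print(f"{label}: {completed:.0f} completed, ${unit_cost:.2f} per completed result")
```

Under that billing assumption, drop-off raises the effective price of every usable result, on top of shrinking the pool you can compare.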

Why Video Pre-Screening Should Come Before Any Assessment

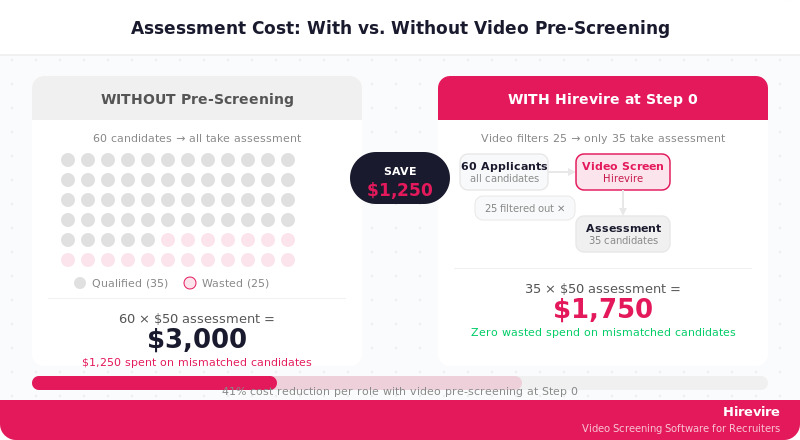

Here's the number that changes the calculus on assessment spend: at $50 per candidate for a cognitive test, screening 60 applicants costs $3,000. If 30 of those candidates were never realistically going to be offered the role - wrong salary expectations, wrong availability, early indicators of poor communication - that's $1,500 spent generating useless data.

This is the problem that async video pre-screening solves. Before spending $50-$100 per candidate on formal assessments, Hirevire lets you collect 2-3 minute video responses to structured questions, at a fixed monthly cost starting at $39/month with no per-interview fees within plan limits.

What Video Pre-Screening Filters

An async video pre-screen at Step 0 surfaces:

- Salary and availability mismatches: Basic questions catch these in the first response

- Communication clarity: For roles where articulate communication matters, a 2-minute video response is more diagnostic than any resume. You'll know immediately.

- Role understanding: Candidates who fundamentally misunderstand what the job involves reveal that quickly on video

- Motivation and fit signals: Enthusiasm and genuine interest come through in video in ways that structured assessments miss entirely

- Soft skills baseline: Research on evaluating skills through asynchronous video interviews suggests that video pre-screening can assess communication, presence, and professionalism before any formal assessment

The Math

Consider a role with 60 applicants:

- Without video pre-screening: All 60 take a $50 cognitive test = $3,000. Assume 25 had misaligned expectations or poor communication - that's $1,250 wasted.

- With Hirevire at Step 0: Video pre-screen filters 25 mismatched candidates before assessment spend. Only 35 take the $50 test = $1,750. Cost reduction: $1,250 on one role.

On Hirevire's Essentials plan ($39/month billed annually), that $1,250 saving covers about 32 months of the platform. For a team running 5+ roles per month, the math is significantly more favorable.
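
The arithmetic above is easy to rerun with your own numbers. The sketch below is a minimal calculator using the figures from this section (60 applicants, 25 filtered out, a $50 test, a $39 monthly plan). The function name and parameters are hypothetical, and it treats the saving as gross, before the subscription cost itself.

```python
def prescreen_savings(applicants: int, filtered_out: int, fee_per_candidate: int) -> int:
    """Assessment spend avoided by filtering candidates before the paid test."""
    if not 0 <= filtered_out <= applicants:
        raise ValueError("filtered_out must be between 0 and applicants")
    spend_without = applicants * fee_per_candidate
    spend_with = (applicants - filtered_out) * fee_per_candidate
    return spend_without - spend_with


MONTHLY_PLAN_COST = 39  # Essentials plan price quoted in this guide

savings = prescreen_savings(applicants=60, filtered_out=25, fee_per_candidate=50)
months_funded = savings // MONTHLY_PLAN_COST  # whole months covered by the saving

print(f"Saved ${savings} on one role, enough for {months_funded} months of the plan")
```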

What Video Pre-Screening Doesn't Replace

Video pre-screening is Step 0, not Step Everything. It doesn't replace:

- A skills test for a role where technical proficiency is the primary qualifier

- A cognitive assessment for a role where learning speed is genuinely predictive

- A personality assessment when working style alignment is a real organizational need

What it does is ensure that the formal assessment budget is spent on candidates who have already cleared basic qualification and communication filters. That's a fundamentally different proposition than sending the full applicant pool into an expensive assessment battery.

For more on assessing soft skills before any formal assessment stage, see Hirevire's guide on assessing soft skills before live interviews.

The best recruitment assessment tools comparison covers leading platforms in detail.

Setting Up Video Pre-Screening with Hirevire

Hirevire creates a shareable link with your pre-screen questions. Candidates record responses asynchronously - no scheduling, no calendar coordination. Recruiters review recordings at 2x speed, share standouts with hiring managers, and move qualified candidates to the formal assessment stage.

The AI layer in Hirevire adds match assessments against your custom criteria - flagging candidates whose responses align with what you've defined as important before you spend a minute reviewing recordings in depth.

Plans start at $39/month (Essentials, billed annually), with no per-interview fees within plan limits - up to 300 interviews/year on Essentials, 1,200 on Professional ($99/month billed annually), and 12,000 on Agency ($199/month billed annually).

Ratings: G2 4.7/5 (25+ reviews) | Capterra 5/5 (20+ reviews) | AppSumo 4.9/5 (70+ reviews)

FAQ

Which assessment is most accurate for predicting job performance?

No single assessment type has universal predictive superiority. Research shows work sample tests (r=0.54) and cognitive ability tests (r=0.51) have the highest validity overall, but both are role-dependent. Work samples predict best when the assessed task directly mirrors job work. Cognitive tests predict best in high-complexity, knowledge-intensive roles. Combinations consistently outperform single-method approaches.

Are cognitive ability tests legal to use in hiring?

Yes, cognitive ability tests are legal to use in hiring in most jurisdictions, but they require careful management. The key legal requirement in the US (under the Uniform Guidelines on Employee Selection Procedures) is that any selection tool showing adverse impact on a protected class must be validated as job-related. Organizations using cognitive tests should: (1) conduct adverse impact analyses regularly, (2) have validation evidence linking the test to job performance, (3) avoid using cognitive scores as hard cutoffs without validated justification.

How do personality assessments differ from cognitive tests?

Personality assessments measure stable behavioral tendencies (how someone typically acts), while cognitive tests measure reasoning ability (how fast and accurately someone can think). They predict different things: personality is more useful for culture and working-style fit; cognitive is more useful for learning speed and adaptability in complex roles. They're not interchangeable. Combining them provides more predictive power than either alone.

What is a situational judgment test and how is it different from a case study interview?

An SJT presents pre-written scenarios with response options - candidates choose or rank what they would do. A case study interview is an open-ended problem where candidates construct their own analysis and recommendations. SJTs are standardized (every candidate gets the same scenarios) and can be administered at scale. Case study interviews allow follow-up and deeper exploration but require interviewer time and are harder to score consistently.

How long should a pre-hire assessment be?

Research consistently shows assessment completion drops off significantly beyond 25-30 minutes. For most hiring situations:

- Skills tests: 20-45 minutes (task complexity determines this, not arbitrary time limits)

- Cognitive tests: 12-20 minutes (most validated tools are designed for this range)

- Personality assessments: 15-25 minutes

- SJTs: 20-35 minutes

Longer isn't more predictive. Completion rate matters - an 80% completion rate on a 20-minute test produces more useful data than a 45% completion rate on a 45-minute test.

Can I use multiple assessment types for the same role?

Yes - multi-method assessment consistently outperforms single-method. The practical limits are cost and candidate experience. For mid-to-senior roles where assessment costs are justified, combining a skills test with an SJT or a cognitive test with a personality measure is appropriate. For high-volume entry-level roles, keep the assessment lightweight and let video pre-screening and structured interviews do more of the work.

How do I know if my assessment is working?

Assessment effectiveness should be tracked against downstream outcomes:

- Predictive validity: Do high scorers perform better 6 and 12 months after hire?

- Completion rate: Are candidates completing the assessment? Drops below 60% signal usability problems.

- Adverse impact: Are pass rates significantly different across demographic groups? If yes, investigation and remediation are required.

- Hiring manager satisfaction: Are the assessment-qualified candidates who advance performing well in interviews?

Without tracking these metrics, you can't know whether your assessment is adding value or just adding friction.
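
Two of these checks are simple enough to compute in a spreadsheet or a few lines of code. The sketch below shows one way to do it: a Pearson correlation between assessment scores and later performance ratings as a rough predictive validity estimate, and a completion-rate check against the 60% floor mentioned above. The function names and the sample data are hypothetical, and a real validation study would need far more hires than six.

```python
import math
from typing import Sequence

COMPLETION_FLOOR = 0.60  # below this, the guide suggests looking for usability problems


def pearson_r(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length with at least 2 values")
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one sequence is constant")
    return cov / math.sqrt(var_x * var_y)


def needs_usability_review(started: int, completed: int) -> bool:
    """True when the completion rate falls below the floor."""
    if started <= 0:
        raise ValueError("started must be positive")
    return completed / started < COMPLETION_FLOOR


# Hypothetical data: assessment scores at hire and manager ratings 12 months later
assessment_scores = [62, 70, 75, 81, 88, 93]
ratings_12_months = [2.8, 3.1, 3.0, 3.6, 3.9, 4.2]

print(f"validity estimate r = {pearson_r(assessment_scores, ratings_12_months):.2f}")
print("review usability:", needs_usability_review(started=200, completed=110))
```

Because only hired candidates have performance ratings, a correlation computed this way is based on a restricted range of scores and will usually understate the assessment's true validity.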

What should come before any formal assessment?

An async video pre-screen. Before spending $50-$100 per candidate on formal assessments, basic filters should eliminate clearly mismatched candidates: salary expectations, availability, communication baseline, and role understanding. Hirevire handles this at Step 0 for a fixed monthly cost - ensuring your formal assessment budget is spent on genuinely promising candidates, not the full applicant pool.

How do I handle candidates who refuse to take assessments?

Assessment refusal rates vary significantly by role type and candidate seniority. Senior candidates (10+ years of experience, narrow specialties) have higher refusal rates because they have more alternatives. For roles where assessment is non-negotiable, explain why the specific assessment was chosen and how it's used in the decision. Arbitrary or poorly explained assessment requirements drive the highest refusal rates. For executive roles, consider whether the assessment is genuinely necessary or whether structured interviews and reference checks are more appropriate.

Are there assessments that work well for remote hiring?

All four assessment types described in this guide can be administered remotely. The practical considerations for remote assessment:

- Cognitive tests: Use proctored options for high-stakes roles; unproctored for lower stakes where cheating risk is acceptable

- Skills tests: Work sample tasks are highly adaptable to remote formats - candidates submit their output asynchronously

- Personality: No change from in-person administration

- SJTs: Platform-based administration is the norm; remote makes no practical difference

Async video pre-screening with Hirevire is inherently remote-native - there's no in-person equivalent.

Conclusion: Choosing the Right Assessment for Your Hiring Process

The question "which assessment should you use for the hiring process?" doesn't have a universal answer. It has a framework:

- Identify the primary performance predictor for the role (technical skill, learning speed, working style, or judgment)

- Match that predictor to the assessment type with the strongest validity for it

- Run an async video pre-screen at Step 0 to filter the pool before assessment spend begins

- Use assessment data as one input among several - never as the sole decision criterion

The most common mistake is using whatever assessment is easiest to procure rather than what's most predictive. The second most common mistake is sending the full applicant pool through a $50/candidate assessment before filtering out obvious mismatches.

Key Takeaways

- Skills-based assessments work best when technical proficiency is directly measurable and a genuine disqualifier

- Cognitive tests are highly predictive for complex, knowledge-intensive roles - but carry adverse impact risks that require careful management

- Personality assessments add real value as a supporting input, not a standalone filter

- SJTs are underused and often the best tool for roles where judgment under realistic conditions is the primary differentiator

- Video pre-screening at Step 0 consistently improves ROI on formal assessment spend by filtering mismatched candidates before costs are incurred

Your Next Steps

- Identify the primary success predictor for your next open role

- Match it to the appropriate assessment type using the framework in this guide

- Set up Hirevire as your Step 0 filter - reduce assessment costs and improve funnel quality before the first formal test is sent

Ready to add a Step 0 to your assessment process?